The Threads dilemma: a lesson in cooperative decision-making

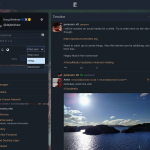

As a member of social.coop, a cooperative social network that runs on Mastodon, I’ve recently watched our community grapple with a significant decision. Meta, the company behind Facebook, Instagram, and WhatsApp, announced that its new platform, Threads, would join the ‘Fediverse’: a network of independently run servers (instances) that interoperate through federated social networking protocols such as ActivityPub. This sparked a debate within social.coop about whether to block any federated instances created by Meta, a decision that had to be made democratically.

However, the way this decision was introduced was problematic. A member who hadn’t been active in prior discussions suddenly proposed a vote. This rushed approach led to low turnout: only 68 out of several thousand members voted. The result was inconclusive and not representative of the community.

What followed was a convoluted discussion spread across multiple threads (no pun intended!) that were hard to keep track of. Many comments were made without reference to earlier discussions. Two more ‘formal’ proposals were put forward, but neither offered a clear way ahead. The lack of structure and process was evident and concerning.

The issue escalated to the point where some members suggested splitting the co-op into those for and those against defederating from Threads. This is a situation we should strive to avoid. Cooperatives work best when they have clearly defined, well-understood processes that lead to productive discussions and timely decisions. Unfortunately, that was not the case in our response to Meta’s announcement about Threads.

My concern isn’t so much the decision to defederate from Threads as the process by which we arrived at this point. The discussion was exhausting and unproductive, with a constant stream of notifications about new opinions that often repeated what had already been said. It felt like a cycle of debate with no resolution in sight.

Cooperatives need not rely solely on consensus or voting. A useful alternative is consent-based decision-making, which focuses on whether members object to a proposal rather than whether they agree with it. This approach acknowledges that members bring different perspectives and experiences, while still allowing us to work together towards a shared aim.

To improve our decision-making process, I suggest the following:

- Proposals should follow agreed guidelines. If a member is unsure how to proceed, they should consult with a working group.

- There should be separate areas for discussion and decision-making.

- Proposals should be high-level and brought to the whole membership only if they aren’t covered by an existing policy.

- We should use consent-based decision-making, asking whether people object (i.e. have critical concerns) rather than requiring them to agree wholeheartedly.

- Our mantra should be: is this good enough for now and safe enough to try?

By adopting these practices, we can help ensure that our cooperative remains a place for productive cooperation and informed decision-making. It’s easy to become overwhelmed by discussion and debate, but the wider cooperative movement has already developed ways of dealing with this, and I think social.coop would benefit from adopting them.

Photo by Stephane Gagnon on Unsplash