Countering misinformation in federated social networks: an introduction to the Zappa project

One thing I’ve learned from spending all of my adult life online and being involved in lots of innovation projects is that you can have the best bookmarking system in the world, but it means nothing if you don’t do something with the stuff you’ve bookmarked. Usually, for me, that means turning what I’ve filed away into some kind of blog post. It’s basically the reason Thought Shrapnel exists.

Last week I started some new work with the Bonfire team on something called the Zappa project. Bonfire is a fork of CommonsPub, the underlying codebase for MoodleNet.

> Self-host your online community and shape your experience at the most granular level: add and remove features, change behaviours and appearance, tune, swap or turn off algorithms. You are in total control.

Bonfire is modular, with different extensions allowing communities to customise their own social network. The focus of Zappa is shaped by a grant from the Culture of Solidarity Fund.

> The grant will be used to release a beta version of Bonfire Social and to develop Zappa – a custom bonfire extension to empower communities with a dedicated tool to deal with the coronavirus “infodemic” and online misinformation in general.

The announcement blog post talks of “experimental artificial intelligence engines” and “Zappa scores”. These may be longer-term goals; my job right now is to talk to people with real-world needs. Over the last 20 years, I’ve found that innovation projects have a sweet spot between what’s useful, what’s theoretically sound, and what’s technically achievable.

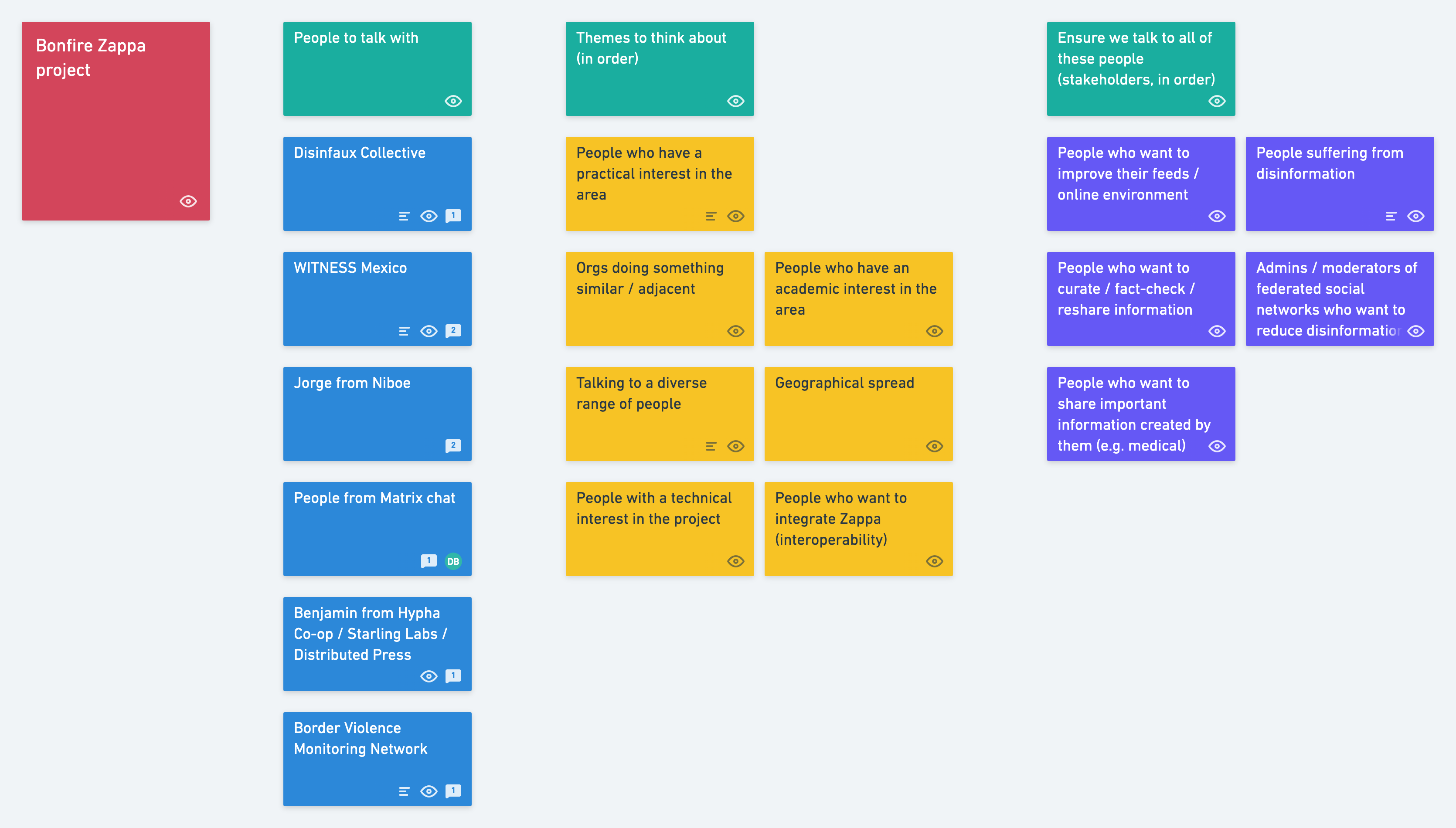

Last week, I met with Ivan to try to define user groups and the initial scope of the project. It’s easy to think that the target audience is ‘everyone’, but it’s much more valuable to think about who the Zappa project is likely to be useful for in the near future.

The above Whimsical board shows:

- a list of people we can/should speak to (we’ve spoken with two orgs so far)

- themes of which we should be aware/cognisant

- groups of people we should talk with

The latter two lists are prioritised based on our current thinking and, as you can see, they’re biased towards action: towards those who don’t merely have an academic interest in the Zappa project, but who have some skin in the anti-misinformation game.

A note in passing: many people use ‘misinformation’ and ‘disinformation’ as near-synonyms. But even in common usage, there’s an important difference in meaning between them.

We’d say, for example, that someone was ‘misinformed’, in which case their not having the correct information wouldn’t necessarily be their fault. On the other hand, we might talk about state actors waging a ‘disinformation’ campaign, which would very much be intentional, and probably focused on creating a mixture of fear, uncertainty, and/or doubt. The key difference, in other words, is intent.

The line between misinformation and disinformation can be blurry, but it’s probably helpful to conceptualise what we’re doing in terms of the grant: to help “empower communities with a dedicated tool to deal with the coronavirus ‘infodemic’ and online misinformation in general”.

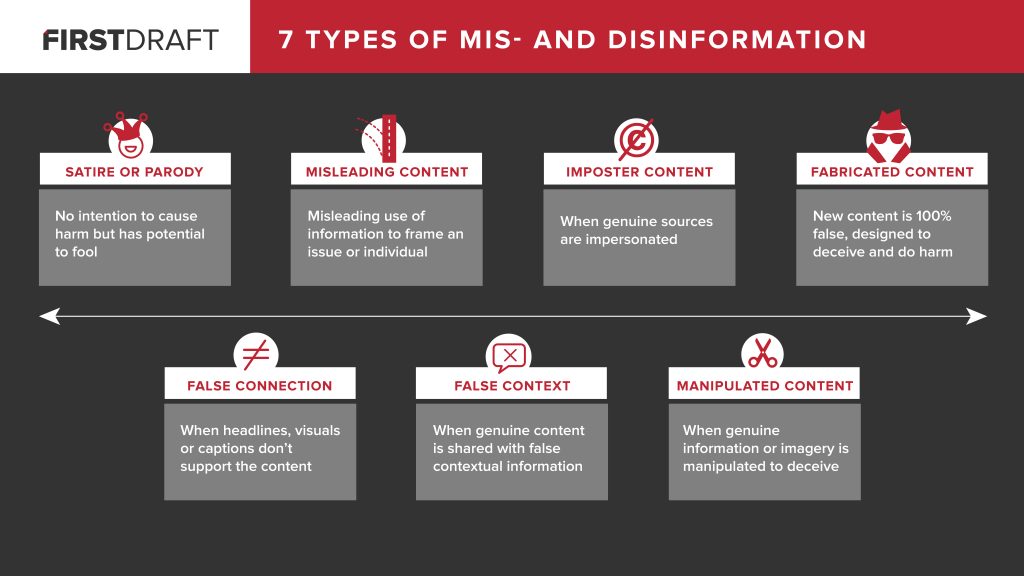

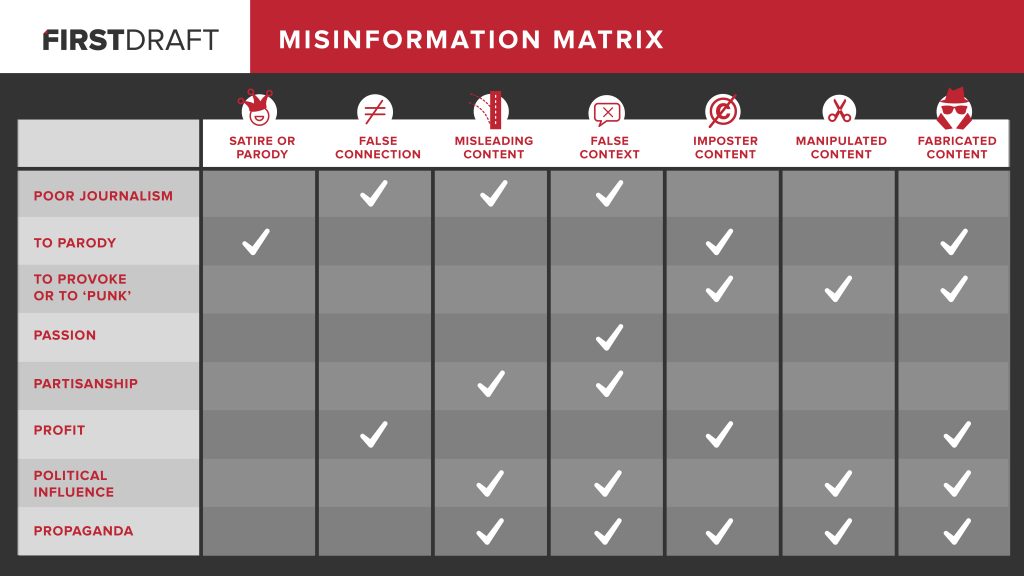

One of the resources that I’ve found particularly helpful (and which I wish I’d seen before presenting on “Truth, Lies & Digital Fluency” a couple of years ago) is “Fake news. It’s complicated.” Its author, Claire Wardle from First Draft, lays out 7 Types of Mis- and Disinformation on a spectrum from ‘satire or parody’ (which some wouldn’t even conceptualise as misinformation) to ‘fabricated content’ (which most people would definitely consider disinformation).

Some of the differences between these types can be quite nuanced, so I found the Misinformation Matrix in the same post really useful for looking at why the misinformation was published in the first place. The reasons range from sloppy journalistic practices through to flat-out propaganda.

The user research we’re doing at the moment focuses on what types of misinformation human rights organisations, scientists, and other front-line orgs are suffering from, how and where it shows up, and what they’ve tried to do about it.

So far, we’ve discovered that countering misinformation can be a huge time suck for people who are often volunteering for charities, non-profits, or loosely-organised groups. Some areas of the world seem to suffer more than others, and particular platforms are currently doing worse than others. All of them, of course, could do much better.

We’re still gathering people and organisations for this project. So if, based on the above, you know someone you think it would help us to talk to, please get in touch! You can leave a comment below, or get in contact via email.