Apple product launches as attention conservation devices

TL;DR: we use Apple’s regular product launches as a sense-check to cope with the myriad of technologies in which we could invest our time and attention.

Some background

Yesterday was another Apple product launch. Since the passing of Steve Jobs they feel less and less like the Wizard of Oz showing us behind the curtain, and more like another tech company wheeling out incremental updates while their competition catches up. This time, both Microsoft and Adobe shared the stage with Tim Cook and co, for goodness’ sake.

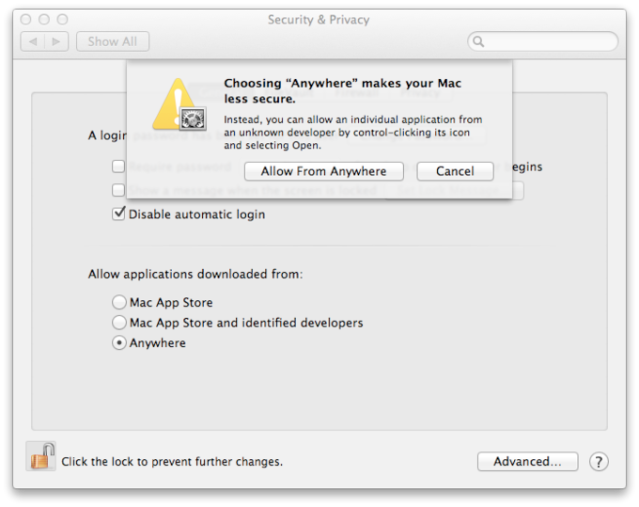

There’s been a lot of ink spilled and pixels pushed about Apple’s ‘culture of innovation’ and its ‘design-led principles’. People argue that you can get better value for money with other devices. Others (including me) worry about vendor lock-in. And during yesterday’s event, so many people in my Twitter timeline were pointing out that the features and products Apple was launching have been available on other systems for years.

But I think this misses the point. If you’ve got five minutes to spare, Steve Jobs explains why it’s irrelevant in his answer to a question at WWDC 1997.

The point is that market leaders make opinionated choices. They put the user first and make decisions based on what’s useful for the user.

Conservation of attention

I’d argue that Apple’s product launches are now cultural artefacts. They’re reported in the regular news alongside world disasters and briefings about national politics. Rather than treating this as ‘entertainment news’, I think it’s more instructive to see Apple’s product launches as attention conservation devices.

Let me explain.

In the not-so-recent past, it was entirely possible for people to choose not to pay attention at all to consumer technology. It could just ‘not be for them’. They wouldn’t even feature on the technology adoption curve. People like this used to live out their lives without giving a second thought to things that others (including me) would happily choose to consider during every waking moment.

Nowadays, without a smartphone and a social network account, you’re quite likely to feel like a social pariah. As a result, you’re forced to pay some attention to consumer technology. But there’s so much of it! Thankfully, there’s an organisation you can pay a lot of money to provide you with a small, continually-updated, fully-supported product line that ensures you have all the technology you need in your life.

My favourite manufacturer, as I mentioned on the TIDE podcast this week, is actually Sony. The difference between Apple and Sony is that the latter doesn’t tell people what to pay attention to. Sony provides a multitude of options to fill almost any niche. I can imagine Apple’s designers having far fewer user personas than other organisations, if they use them at all.

Conclusion

If I were an academic, I think I’d do some more research into this area. For instance, Apple has never put a Blu-ray drive into one of its machines, choosing instead to phase out physical media. As a result, it has done extremely well and has tied this in with developments around app stores and new, easier ways to pay for digital goods. However, the mojo only lasts as long as Apple’s products are fashionable and people agree with the opinionated judgements it’s making.

Attention is a zero-sum game: we’ve only got so much of it, and once it’s gone, it’s gone. By providing regular, timely, opinionated updates about the state of the field they’re leading, Apple not only make massive profits but also become the world’s de facto ‘innovation department’, even if they didn’t invent the technologies they’re showcasing.

Image CC BY-NC-SA LoKan Sardari